Over the past year, website owners have started noticing a new kind of traffic spike that comes not from humans but from AI bots and large language model (LLM) crawlers. Some of this traffic is legitimate (think search indexing or AI assistants gathering data), but a growing portion is aggressive, wasteful, or even malicious.

If left unmanaged, this bot traffic can slow down your site, increase hosting costs, and degrade the experience for real users. The challenge? Blocking bots incorrectly can damage your SEO and visibility.

Here’s how to strike the right balance.

The Rise of AI Bots (and Why They’re a Problem)

Traditional search engine crawlers like Googlebot and Bingbot have always indexed websites in a relatively predictable and respectful way.

AI crawlers are different.

Companies like OpenAI, Anthropic, and Perplexity AI deploy bots that:

- Crawl large portions of the web rapidly

- Revisit pages frequently

- Sometimes ignore crawl-delay guidelines

- May not always respect robots.txt

On top of that, malicious bots often disguise themselves as legitimate crawlers.

The result:

- Increased server load

- Higher bandwidth costs

- Slower page load times

- Potential downtime

Why You Shouldn’t Just Block Everything

It might be tempting to block all bots outright, but that’s risky.

Search engines rely on crawlers to:

- Index your pages

- Rank your content

- Surface your site in search results

Blocking legitimate bots like Googlebot or Bingbot can:

- Remove your site from search indexes

- Destroy organic traffic

- Hurt long-term discoverability

The goal is not to eliminate bots but to manage them intelligently.

Using Cloudflare to Control Bot Traffic

Platforms like Cloudflare provide powerful tools to filter and control bot traffic without harming SEO.

Here are the most effective strategies:

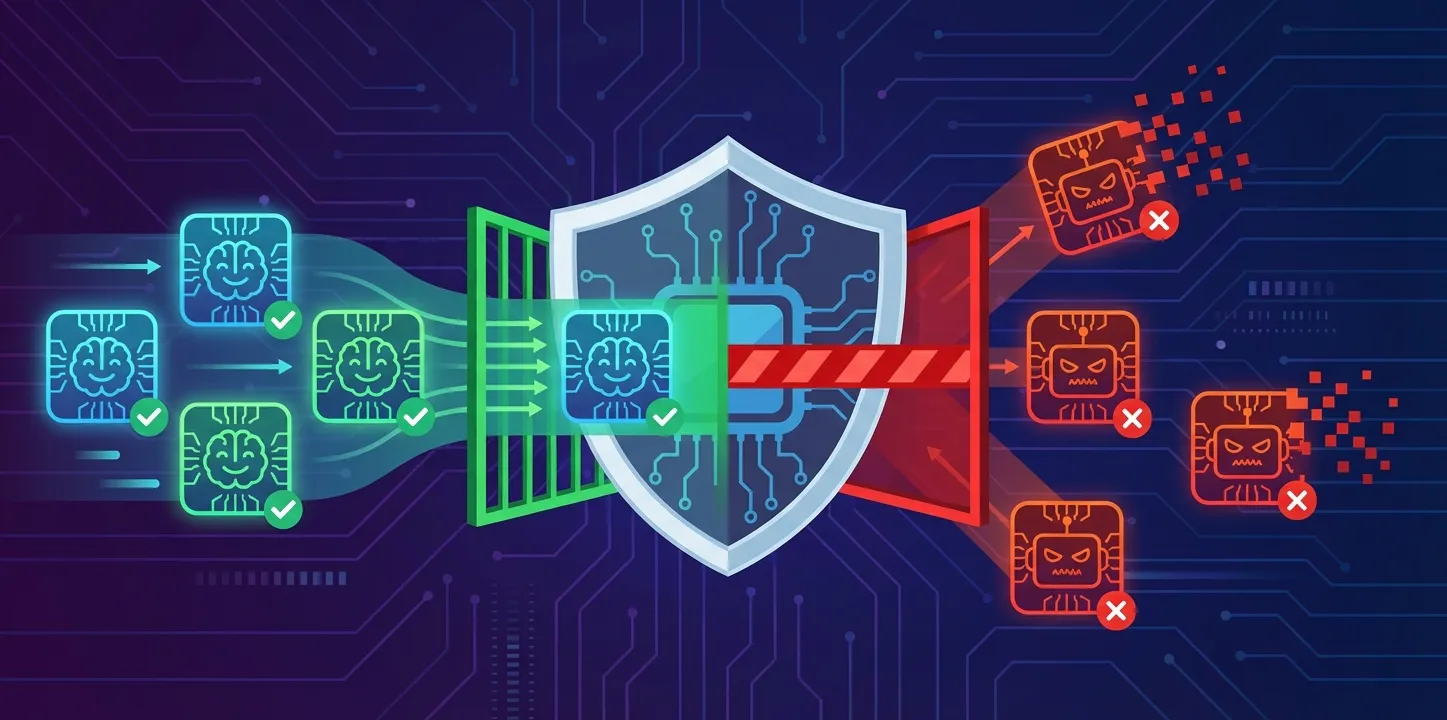

Enable Bot Management

Cloudflare’s Bot Management (or Bot Fight Mode and Super Bot Fight Mode on lower-tier plans) automatically:

- Identifies known good bots (Google, Bing, etc.)

- Scores traffic based on bot likelihood

- Blocks or challenges suspicious requests

This allows you to:

- Let legitimate crawlers through

- Stop malicious or unknown bots
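To make the scoring idea concrete, here is a minimal Python sketch of how a bot score could map to an action. It assumes a 1 to 99 scale where lower scores mean "more likely automated", which is how Cloudflare's documentation describes its bot score. The `Request` class, the `decide` function, and both thresholds are illustrative names and values, not part of Cloudflare's API.

```python
from dataclasses import dataclass


@dataclass
class Request:
    bot_score: int      # assumed 1-99 scale; lower means more likely automated
    verified_bot: bool  # True when the provider has verified the crawler


def decide(request: Request, block_below: int = 2, challenge_below: int = 30) -> str:
    """Return 'allow', 'challenge', or 'block' for a single request."""
    if request.verified_bot:
        return "allow"  # verified search crawlers always get through
    if request.bot_score < block_below:
        return "block"  # almost certainly automated
    if request.bot_score < challenge_below:
        return "challenge"  # likely automated, so ask for proof
    return "allow"  # likely human
```

Checking the verified flag first is the important design choice: a real search crawler is automated by definition, so a score-only rule would penalize exactly the traffic you want to keep.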

Create Custom Firewall Rules

You can define rules (Cloudflare now calls these WAF custom rules) such as:

- Block traffic with suspicious user agents

- Rate-limit repeated requests to the same endpoint

- Challenge traffic from high-risk regions (if relevant)

For example:

- If a client requests 100+ pages within a few seconds → challenge or block

- If a request has an empty or missing User-Agent header → block
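The two example rules can be sketched as a small decision function. This is plain Python for illustration, not Cloudflare's rule syntax; the `evaluate` function, the `SUSPICIOUS_UA` pattern list, and the `burst_limit` default are all assumptions you would replace with values from your own traffic.

```python
import re
from typing import Optional

# Hypothetical list of client libraries that rarely represent real visitors.
SUSPICIOUS_UA = re.compile(r"(python-requests|scrapy|curl|wget)", re.IGNORECASE)


def evaluate(user_agent: Optional[str], pages_in_window: int, burst_limit: int = 100) -> str:
    """Apply the example rules in order and return the first matching action."""
    if not user_agent or not user_agent.strip():
        return "block"  # empty or missing User-Agent header
    if SUSPICIOUS_UA.search(user_agent):
        return "block"  # user agent matches a known scraping tool
    if pages_in_window >= burst_limit:
        return "challenge"  # too many pages in a short window
    return "allow"
```

The burst rule returns a challenge rather than a block because a fast crawl alone does not prove bad intent, and a challenge lets a real browser continue.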

Use Rate Limiting

Rate limiting is one of the most effective tools for stopping aggressive crawlers.

Instead of blocking bots entirely, you:

- Slow them down

- Reduce server strain

- Maintain accessibility for legitimate indexing

This is especially useful for:

- API endpoints

- Search pages

- Dynamic content
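If you are curious what a rate limiter does under the hood, the following is a minimal sliding-window sketch in Python. It is not how Cloudflare implements rate limiting; the `SlidingWindowLimiter` class and its parameters are hypothetical, and timestamps are passed in explicitly so the behavior is easy to follow.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per client within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client id -> recent request timestamps

    def allow(self, client: str, now: float) -> bool:
        hits = self._hits[client]
        # Forget requests that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: the caller would typically answer 429
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10.0)
results = [limiter.allow("crawler-a", t) for t in (0.0, 1.0, 2.0, 3.0)]
```

In this run the first three requests pass and the fourth is refused, but the same client is allowed again once the oldest request leaves the window. The crawler is slowed down, not locked out.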

Verify Known Bots

Not all bots claiming to be Google or Bing are legitimate.

Cloudflare can:

- Verify IP ranges of real crawlers

- Detect spoofed bots

- Automatically allow verified bots

This ensures:

- SEO bots are not blocked

- Fake bots don’t slip through
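Outside Cloudflare, you can run a similar check yourself. The sketch below uses a different technique from IP range lists: the reverse DNS plus forward DNS confirmation that Google and Bing document for site owners. The function names and the injectable `reverse` and `forward` parameters are assumptions made so the logic can be exercised without network access, and the forward lookup shown here handles IPv4 only.

```python
import socket
from typing import Callable, List

# Hostname suffixes that Google and Bing document for their crawlers.
TRUSTED_SUFFIXES = (".googlebot.com", ".google.com", ".search.msn.com")


def _reverse(ip: str) -> str:
    """Look up the PTR hostname for an IP address."""
    return socket.gethostbyaddr(ip)[0]


def _forward(host: str) -> List[str]:
    """Look up the IPv4 addresses for a hostname."""
    return socket.gethostbyname_ex(host)[2]


def is_verified_crawler(
    ip: str,
    reverse: Callable[[str], str] = _reverse,
    forward: Callable[[str], List[str]] = _forward,
) -> bool:
    """Return True only if reverse and forward DNS agree on a trusted hostname."""
    try:
        host = reverse(ip).rstrip(".").lower()
        if not host.endswith(TRUSTED_SUFFIXES):
            return False  # the hostname is not in a crawler domain
        return ip in forward(host)  # the hostname must resolve back to the same IP
    except OSError:
        return False  # DNS lookup failed, so treat the client as unverified
```

The forward lookup matters because anyone who controls their own reverse DNS can claim a hostname that looks like Googlebot. Only the real owner of the domain can make that hostname resolve back to the requesting IP.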

Optimize Your robots.txt (But Don’t Rely on It Alone)

Your robots.txt file can guide well-behaved bots, but compliance is voluntary. Use it as a signal, not a defense mechanism.
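One way to sanity-check a robots.txt before publishing it is to run it through Python's standard `urllib.robotparser`. The rules below are only an example policy: `GPTBot` and `ClaudeBot` are the user agent tokens published by OpenAI and Anthropic at the time of writing (worth confirming against each vendor's documentation), and the `example.com` URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Example policy: opt out of two AI crawlers, keep everyone out of wp-admin.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask how a compliant crawler would interpret the file.
gptbot_allowed = parser.can_fetch("GPTBot", "https://example.com/blog/post")
googlebot_allowed = parser.can_fetch("Googlebot", "https://example.com/blog/post")
googlebot_admin = parser.can_fetch("Googlebot", "https://example.com/wp-admin/options.php")
```

Here the AI crawlers are refused everywhere, while Googlebot can still reach the blog post but not the admin area. Keep in mind that this only predicts what a compliant crawler will do; a bot that ignores robots.txt is unaffected.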

Google Robots.txt Introduction Guide

Robots.txt Examples and Best Practices

Debugging Robots.txt and Noindex Issues

Add Caching at Every Level

Caching reduces the impact of bot traffic on WordPress.

Use:

- Cloudflare caching

- A WordPress caching plugin

- Optional server-level caching

Plugins like WP Rocket or W3 Total Cache can dramatically reduce load.

When bots hit cached pages, your server does far less work.
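A toy model shows why this matters. The `PageCache` class below is a hypothetical in-memory cache with a time-to-live, not the mechanism used by Cloudflare or any WordPress plugin; it simply counts how often the "origin" has to render a page.

```python
from typing import Callable, Dict, Tuple


class PageCache:
    """Serve a stored copy of a page until it is older than ttl_seconds."""

    def __init__(self, render: Callable[[str], str], ttl_seconds: float = 300.0) -> None:
        self.render = render
        self.ttl_seconds = ttl_seconds
        self.origin_renders = 0  # how many times the expensive render ran
        self._store: Dict[str, Tuple[float, str]] = {}

    def get(self, path: str, now: float) -> str:
        cached = self._store.get(path)
        if cached and now - cached[0] < self.ttl_seconds:
            return cached[1]  # cache hit: no origin work
        self.origin_renders += 1  # cache miss: render and store
        html = self.render(path)
        self._store[path] = (now, html)
        return html


cache = PageCache(render=lambda path: f"<html>{path}</html>", ttl_seconds=300.0)
for i in range(1000):  # a bot requesting the same page 1000 times in 100 seconds
    cache.get("/pricing", now=i * 0.1)
```

After the loop, `cache.origin_renders` is 1: a thousand bot requests cost the origin a single render. The trade-off is freshness, which is why the time-to-live should match how often the page actually changes.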

Monitor What Bots Are Actually Doing

Use Cloudflare analytics and your web server’s access logs to identify patterns.

Watch for:

- Repeated hits to the same endpoints

- High request rates from single IPs

- Crawling of irrelevant pages

Once you see patterns, you can create targeted rules instead of guessing.
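If you have raw access logs, a few lines of Python can surface these patterns. This sketch assumes the common "combined" log format used by default in Apache and Nginx; the `summarize` function is a hypothetical helper and the sample lines are made up for illustration.

```python
import re
from collections import Counter

# Matches the combined log format: IP, timestamp, request line, status, size, referer, agent.
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize(lines, top=3):
    """Count requests per IP, per path, and per user agent."""
    ips, paths, agents = Counter(), Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines in an unexpected format
        ips[match["ip"]] += 1
        paths[match["path"]] += 1
        agents[match["agent"]] += 1
    return {
        "ips": ips.most_common(top),
        "paths": paths.most_common(top),
        "agents": agents.most_common(top),
    }


sample = [
    '203.0.113.9 - - [10/May/2025:10:00:00 +0000] "GET /?s=shoes HTTP/1.1" 200 5120 "-" "ExampleBot/1.0"',
    '203.0.113.9 - - [10/May/2025:10:00:01 +0000] "GET /?s=shoes HTTP/1.1" 200 5120 "-" "ExampleBot/1.0"',
    '203.0.113.9 - - [10/May/2025:10:00:01 +0000] "GET /?s=boots HTTP/1.1" 200 4980 "-" "ExampleBot/1.0"',
    '198.51.100.4 - - [10/May/2025:10:00:02 +0000] "GET /about/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
]
summary = summarize(sample)
```

In the sample, one IP accounts for three of the four requests and keeps hitting the search endpoint, which is the kind of pattern that justifies a targeted rate-limit rule on that path.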

Best Practices for WordPress SEO Safety

To keep your rankings safe:

- Always allow verified search engine bots

- Avoid blocking entire countries unless necessary

- Test firewall rules before applying them broadly

- Monitor indexing in Google Search Console

- Focus on reducing crawl waste rather than blocking access

Final Thoughts

AI bots are now a normal part of running a WordPress site. Some are useful. Many are not.

If you ignore them, they will consume resources and slow your site down. If you block them incorrectly, you risk losing your search rankings.

The solution is balance.

With tools like Cloudflare and a properly configured WordPress setup, you can reduce server strain, keep your site fast, and protect your SEO at the same time.
